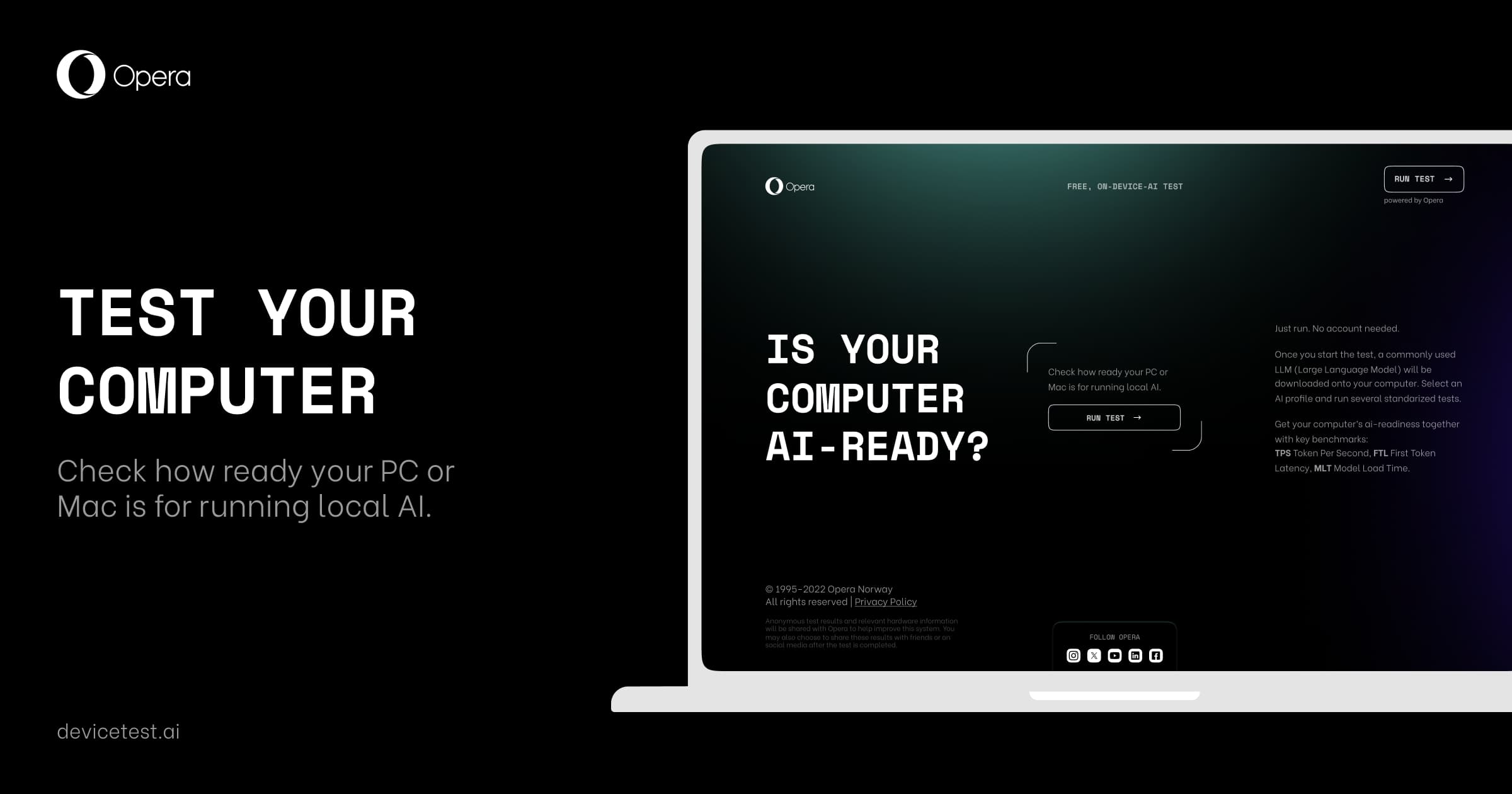

The navigator Opera it received benchmark tool called devicetest.ai. This tool is the first in the world to be made available to the general public and can be accessed directly through the browser. devicetest.ai allows users to check their devices’ ability to run Artificial Intelligence (AI) locally.

Available in the latest version of Opera Developer, the first browser to support embedded local language models (LLMs), the online platform marks a significant step in integrating AI into everyday device use. Running AI locally brings benefits such as new usage possibilities and improvements in user privacy, which eliminates the need for communication with external servers. However, this also subjects devices to new performance challenges.

How does devicetest.ai work?

To use devicetest.ai, users must follow a few simple steps:

- Access the specific link using the Opera Developer.

- Read the relevant information and click “Run Test”.

- Choose a test profile, which will determine which local AI model will be downloaded and used.

- Start the test.

The available test profiles vary depending on the capabilities required by the AI model. Depending on the system specifications and the chosen profile, the test can last from 3 to 20 minutes. Once complete, the results are displayed and can be shared or downloaded as a CSV file for further analysis.

Metrics evaluated

Results are color-coded for easy interpretation:

- Verde indicates that the device is AI ready.

- Yellow means the device is AI functional.

- Red points out that the device is not AI-ready.

The main metrics evaluated by the tool are:

- Tokens Per Second (TPS):

- Definition: TPS measures how many words the language model can process per second. In the context of LLMs, a “token” can be a word or a fraction of a word.

- Importance: This metric is key to evaluating the efficiency and speed at which the device can process large volumes of text, which is crucial for AI applications that rely on fast and accurate responses.

- First Token Latency (FTL):

- Definition: FTL measures the time it takes for the model to generate the first word after receiving a command or prompt.

- Importance: Latency is a critical factor in AI applications that require real-time interaction. Lower latency means faster responses, improving the user experience, especially in interactive tasks like chatbots and virtual assistants.

- Model Load Time (MLT):

- Definition: MLT measures the time it takes to load the language model into the device’s RAM when it boots for the first time.

- Importance: This load time impacts the system’s readiness to perform AI tasks. Models that load faster allow you to start your work more quickly operations, which is important for applications that require frequent launches.

Test details

The benchmark tool performs the tests multiple times to ensure consistency of results. Each test evaluates how the device handles different tasks that a local AI model would normally perform. The average of the repetitions is used to provide a reliable result. Additionally, the feature displays system specifications and allows users to see which hardware components are being most stressed during evaluations.

Privacy and sharing

A Opera ensures that the data used in the test is only to produce the results and allow comparisons with other users. No personal information is collected, and no data is associated with specific users, IP addresses or other identifiers.

To access the benchmark platform, you need to download the Opera Developer. More information about privacy practices can be found in the Privacy Statement of Opera.

“As at devicetest.ai, a Opera offers a unique and affordable tool for anyone interested in checking their devices’ readiness for the AI era. This launch represents a significant advance in the democratization of AI technology, making it available to everyone”, highlights the company in a statement.

Source: https://www.hardware.com.br/noticias/2024-06/opera-lanca-primeira-ferramenta-de-benchmark-de-ia-direto-no-navegador.html